Apple today previewed a range of new accessibility features, including Door Detection, Apple Watch Mirroring, Live Captions, and more.

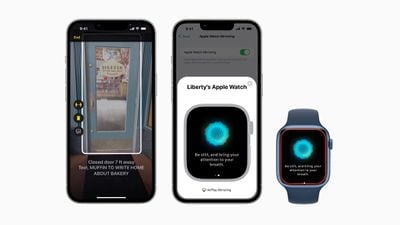

Door Detection will help individuals who are blind or have low vision use their iPhone or iPad to locate a door upon arriving at a new destination, understand how far they are from it, and get a description of the door's attributes, including how it can be opened and any nearby signs or symbols. The feature will be part of a new "Detection Mode" in Magnifier, alongside People Detection and Image Descriptions. Door Detection will only be available on iPhones and iPads with a LiDAR scanner.

Users with physical disabilities will be able to fully control their Apple Watch Series 6 and Apple Watch Series 7 from their iPhone with Apple Watch Mirroring via AirPlay, using assistive features like Voice Control and Switch Control and inputs such as voice commands, sound actions, head tracking, and more.

New Quick Actions on the Apple Watch will let users perform a double-pinch gesture to answer or end a phone call, dismiss a notification, take a photo, play or pause media in the Now Playing app, and start, pause, or resume a workout.

Deaf users and those who are hard of hearing will be able to use Live Captions across the iPhone, iPad, and Mac to follow any audio content more easily, such as during a phone call or when watching video content. Users can adjust the font size, see Live Captions for all participants in a group FaceTime call, and type responses that are spoken aloud. English Live Captions will be available in beta on the iPhone 11 and later, iPad models with the A12 Bionic and later, and Macs with Apple silicon later this year.

Apple will expand support for VoiceOver, its screen reader for blind and low vision users, with 20 new languages and locales, including Bengali, Bulgarian, Catalan, Ukrainian, and Vietnamese. In addition, users will be able to select from dozens of new optimized voices across languages and use a new Text Checker tool to find formatting issues in text.

There will also be Sound Recognition for unique home doorbells and appliances, adjustable response times for Siri, new themes and customization options in Apple Books, and sound and haptic feedback for VoiceOver users in Apple Maps to find the starting point for walking directions.

The new accessibility features will be released later this year via software updates. For more information, see Apple's full press release.

To celebrate Global Accessibility Awareness Day, Apple also announced plans to launch SignTime in Canada on May 19 to connect customers with American Sign Language (ASL) interpreters, and to hold live sessions in Apple Stores and share social media posts to help users discover accessibility features. The company will also expand the Accessibility Assistant shortcut to the Mac and Apple Watch, highlight accessibility features in Apple Fitness+ such as Audio Hints, release a Park Access for All guide in Apple Maps, and flag accessibility-focused content in the App Store, Apple Books, the TV app, and Apple Music.